Microsoft has introduced a meaningful set of Quality Evaluation Agent capabilities across Dynamics 365 Contact Center and Dynamics 365 Customer Service in 2025 release wave 2. The core evaluation framework in Contact Center became generally available on October 24, 2025, and Customer Service added related capabilities such as evaluating cases, extending criteria, simulation, multilingual criteria, and bulk case evaluation between October 2025 and March 2026.

I think this is one of the more important updates for service organizations, especially if you lead QA, operations, or service delivery.

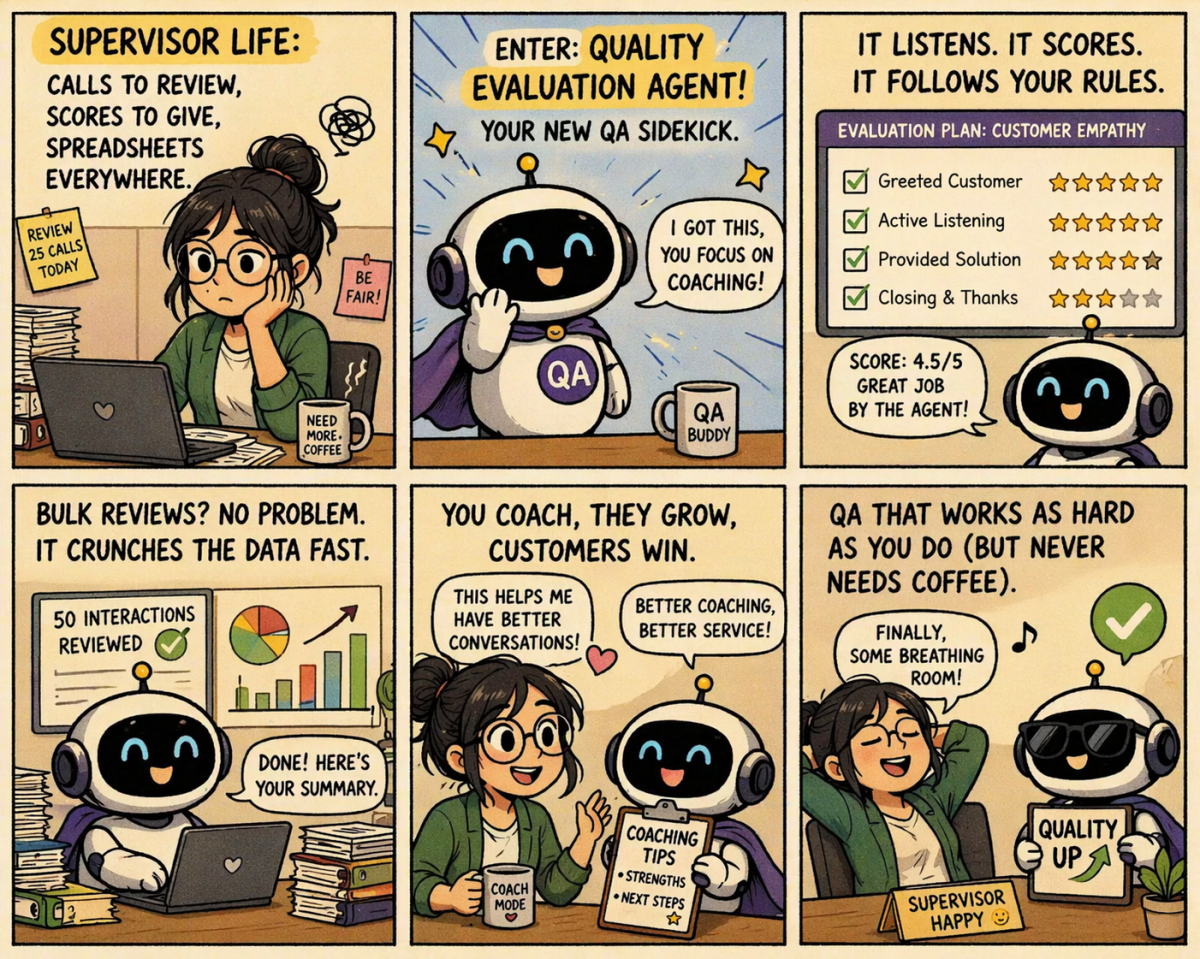

For a long time, quality assurance in service teams has depended heavily on manual reviews, spot checks, and supervisor effort. That works to a point, but it becomes hard to scale as teams grow, channels expand, and expectations around consistency increase.

This is where Quality Evaluation Agent starts to become interesting.

Why this matters

Microsoft positions the evaluation framework as the foundation of its quality management solution. It allows supervisors to define detailed evaluation criteria, create evaluation plans, and automatically assess interactions based on specific conditions. It also supports score calculations using either weighted criteria or equal distribution, and supervisors can review AI-generated scores before they are published.

That is a big shift.

Instead of QA being only a manual activity after the fact, it starts becoming something more structured, repeatable, and easier to operationalize across the organization.

And it is not limited to one type of interaction. Microsoft says the framework can support autonomous and AI-assisted assessments across cases, conversations, emails, and surveys.
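To make the difference between weighted criteria and equal distribution concrete, here is a minimal sketch of the arithmetic. It is not Microsoft's implementation, and every name in it (`CriterionResult`, `overall_score`, the sample criteria and weights) is a hypothetical illustration. It only assumes that each criterion yields a value between 0 and 1 and that the overall score is a normalized average.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CriterionResult:
    """One answered criterion. Hypothetical shape, not a product schema."""
    name: str
    points_earned: float  # share of the criterion met, from 0.0 to 1.0
    weight: Optional[float] = None  # only used in weighted mode


def overall_score(results: list[CriterionResult], weighted: bool) -> float:
    """Return a 0-100 score using weighted criteria or equal distribution."""
    if not results:
        raise ValueError("at least one criterion is required")
    if weighted:
        # Missing weights count as zero in this sketch.
        weights = [r.weight if r.weight is not None else 0.0 for r in results]
    else:
        # Equal distribution: every criterion contributes the same share.
        weights = [1.0] * len(results)
    total = sum(weights)
    if total <= 0:
        raise ValueError("weights must sum to a positive number")
    earned = sum(w * r.points_earned for w, r in zip(weights, results))
    return round(100 * earned / total, 2)


results = [
    CriterionResult("Greeting", 0.5, weight=1),
    CriterionResult("Issue resolution", 1.0, weight=3),
    CriterionResult("Compliance statement", 0.0, weight=1),
]
equal_score = overall_score(results, weighted=False)
weighted_score = overall_score(results, weighted=True)
print(equal_score, weighted_score)
```

With these sample numbers, the same interaction scores 50.0 under equal distribution and 70.0 once issue resolution is weighted three times as heavily, which is why the choice of scoring mode deserves a deliberate decision.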

What stands out for me

The first thing that stands out is that this is not just “AI scoring.”

Microsoft has built this around a broader framework:

- evaluation criteria
- evaluation plans
- evaluations and outcomes

That matters because good QA is never just about assigning a score. It is about defining what good looks like, applying it consistently, and then using the results to coach, improve, and govern.

The second thing that stands out is the steady expansion of capabilities after the initial release. In Customer Service, Microsoft added support for evaluating cases, extending criteria, managing criteria versions, simulating criteria before publishing, supporting multilingual criteria, and evaluating cases in bulk. These capabilities went live in stages, on October 24, 2025, February 6, 2026, February 27, 2026, and March 20, 2026.

That tells me Microsoft is not treating this as a one-off feature. It looks more like the start of a broader QA capability set.

Why this is useful for supervisors

From a supervisor perspective, this can help in a few practical ways.

One is consistency.

When quality checks are manual, every reviewer brings some variation. That is normal. But it also makes it harder to compare results fairly across teams, business units, or channels.

Microsoft’s extended criteria model is designed to address that. Supervisors can define a base criteria set for core quality standards and then allow business units to extend those standards with their own requirements. Microsoft also says updates to the source criteria can flow automatically to extended versions, while version history and simulations help validate changes before publishing.

That is a smart model.

It gives organizations a common standard without forcing every team into a rigid one-size-fits-all setup.
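One way to picture the base-plus-extension idea is as a small data model in which an extended criteria set keeps a reference to its source rather than a copy. The sketch below is my own illustration under that assumption, not how Dynamics 365 stores criteria. `CriteriaSet`, `ExtendedCriteria`, and the sample questions are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class CriteriaSet:
    """Base criteria owned by a central QA team (hypothetical model)."""
    name: str
    questions: list[str]
    version: int = 1

    def update(self, questions: list[str]) -> None:
        # Each change bumps the version so history can be tracked.
        self.questions = list(questions)
        self.version += 1


@dataclass
class ExtendedCriteria:
    """A business unit's extension that references the base, not a copy."""
    name: str
    base: CriteriaSet
    extra_questions: list[str] = field(default_factory=list)

    def effective_questions(self) -> list[str]:
        # Resolved at read time, so base updates flow through automatically.
        return self.base.questions + self.extra_questions


core = CriteriaSet("Core quality", [
    "Did the agent verify the customer's identity?",
    "Was the tone professional?",
])
billing = ExtendedCriteria("Billing", core, ["Was the refund policy explained?"])

before = billing.effective_questions()
core.update(core.questions + ["Was the case summarized before closing?"])
after = billing.effective_questions()
print(len(before), len(after), core.version)
```

Because the extension resolves its questions from the base each time, the billing team picks up the new core question without editing its own set.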

The second benefit is scale.

Bulk case evaluation allows supervisors to create evaluation plans directly on case records, run them on a schedule, and target only the right subset of cases based on defined conditions. Microsoft also says each run is logged with history, so teams can see when evaluations ran, which cases were included, and how outcomes changed over time.

That is much better than trying to manage QA through ad hoc spreadsheet tracking and one-off manual reviews.
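The pattern behind bulk evaluation (select cases by condition, evaluate them, log the run) can be sketched in a few lines. This is a conceptual illustration only. `Case`, `RunRecord`, `EvaluationPlan`, and the fixed placeholder score are hypothetical and do not reflect the product's data model or API, and scheduling is left out entirely.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable


@dataclass
class Case:
    case_id: str
    status: str
    priority: str


@dataclass
class RunRecord:
    """What one run of a plan leaves behind in its history."""
    ran_at: datetime
    case_ids: list[str]
    outcomes: dict[str, float]


@dataclass
class EvaluationPlan:
    name: str
    condition: Callable[[Case], bool]  # which cases the plan targets
    history: list[RunRecord] = field(default_factory=list)

    def run(self, cases: list[Case], evaluate: Callable[[Case], float]) -> RunRecord:
        # Only cases matching the plan's condition are evaluated.
        selected = [c for c in cases if self.condition(c)]
        record = RunRecord(
            ran_at=datetime.now(timezone.utc),
            case_ids=[c.case_id for c in selected],
            outcomes={c.case_id: evaluate(c) for c in selected},
        )
        self.history.append(record)
        return record


cases = [
    Case("CAS-001", "Resolved", "High"),
    Case("CAS-002", "Active", "High"),
    Case("CAS-003", "Resolved", "Low"),
]
plan = EvaluationPlan(
    name="Resolved high-priority cases",
    condition=lambda c: c.status == "Resolved" and c.priority == "High",
)
# Placeholder scorer: a real score would come from the evaluation itself.
record = plan.run(cases, evaluate=lambda c: 80.0)
print(record.case_ids, len(plan.history))
```

The useful part is the history list: each run records when it happened and which cases it covered, which is the kind of trail a spreadsheet rarely keeps reliably.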

Why simulation is a big deal

One of my favorite additions here is simulation.

Microsoft added the ability to validate new or revised evaluation criteria against real customer engagement data before broad deployment. Supervisors can test draft or published criteria, review predicted answers and outcomes, rerun simulations, and publish only when they are ready.

This is important because QA criteria are rarely perfect on the first attempt.

In real projects, teams often discover that a question is vague, a scoring rule is too harsh, or a certain type of case behaves differently than expected. Simulation gives supervisors a safer way to tune the model before it starts affecting recurring evaluations.

That is a much more mature way to introduce AI-driven QA.
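As a rough mental model of what a simulation pass gives you, the sketch below runs draft questions over a handful of sample transcripts and flags any question with a suspiciously low pass rate. It assumes simple keyword checks as a stand-in for the AI evaluation, and `simulate`, the draft questions, the transcripts, and the 0.6 threshold are all hypothetical.

```python
from typing import Callable

# A question is modeled here as a yes/no check over a transcript.
Question = Callable[[str], bool]


def simulate(criteria: dict[str, Question], transcripts: list[str]) -> dict[str, float]:
    """Return the share of transcripts that pass each draft question."""
    if not transcripts:
        raise ValueError("need at least one transcript to simulate against")
    return {
        name: sum(check(t) for t in transcripts) / len(transcripts)
        for name, check in criteria.items()
    }


draft = {
    "Greeted the customer": lambda t: t.lower().startswith(("hello", "hi")),
    "Offered further help": lambda t: "anything else" in t.lower(),
}
sample = [
    "Hello, thanks for calling. Is there anything else I can help with?",
    "Hi, how can I help? Your refund is on its way.",
    "Your case is closed.",
    "Hello. Sorry for the delay. Anything else today?",
]

pass_rates = simulate(draft, sample)
# Questions that most interactions fail may be too strict or too vague.
flagged = [name for name, rate in pass_rates.items() if rate < 0.6]
print(pass_rates, flagged)
```

A low pass rate does not prove the question is wrong, but it is a prompt to reread the wording before the criteria start driving recurring evaluations.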

What this means for service leaders

For service leaders, I think the bigger value is not just better evaluations. It is better control.

You get a stronger ability to:

- standardize quality expectations
- compare performance more consistently
- identify issues earlier
- track quality over time
- support coaching with more structure

And because Microsoft has positioned this across both Contact Center and Customer Service, it is also a sign that QA is becoming a more connected part of the broader service platform, not just a niche supervisor tool.

Good service leadership is not only about handling volume. It is also about maintaining quality at scale.

For supervisors and service leaders, this is definitely a capability worth paying attention to.

Happy integrating!