In case you haven’t followed the news, Microsoft Common Data Service has a new name: “Microsoft Dataverse”.

For now, the change is in name only; behind the scenes it is the same CDS that has powered Dynamics 365 and Power Platform for years.

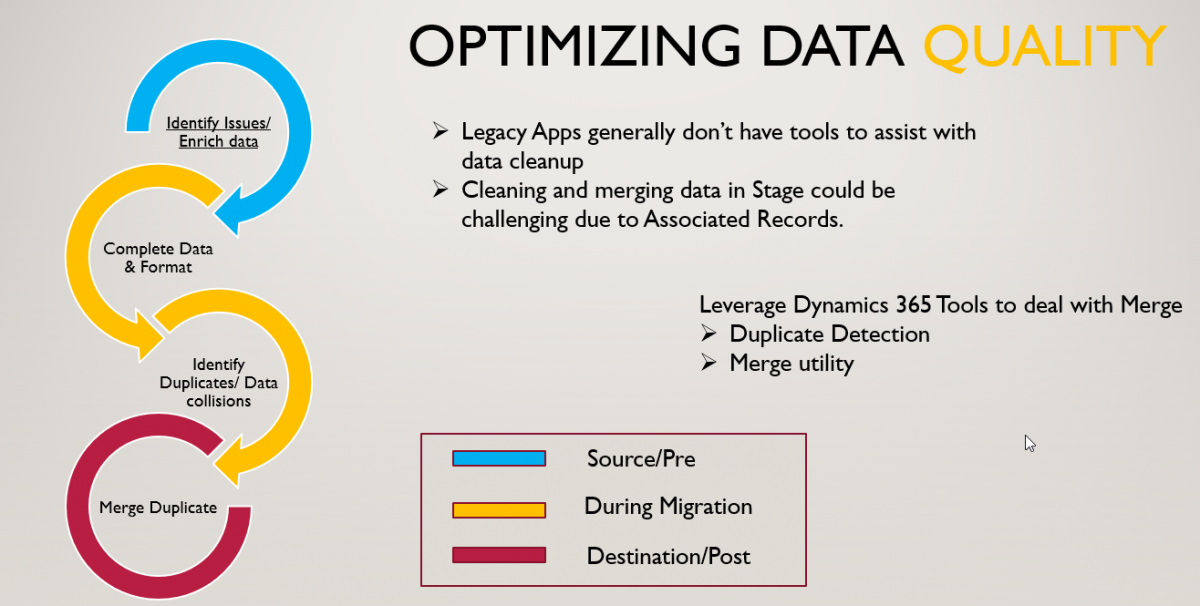

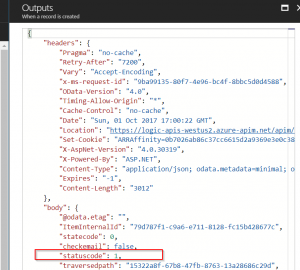

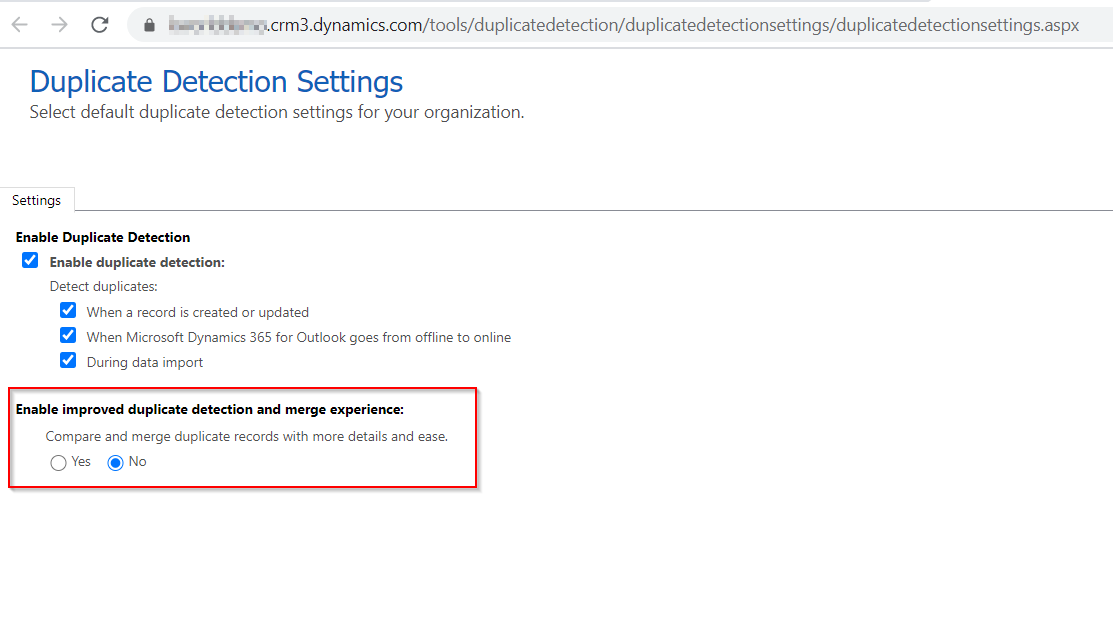

Dataverse duplicate detection and merge capabilities had been due for a feature update for a long time. The alert and merge prompts were not optimized for UCI and looked out of place in UCI apps.

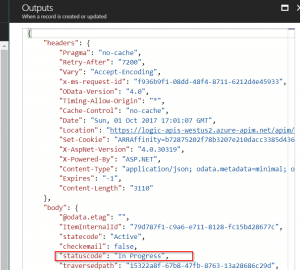

The latest platform update (2020 Release Wave 2) provides new and improved duplicate detection and merge capabilities and adds UCI-compliant forms for merging records.

This feature can be enabled in the Power Platform Admin Center under the Duplicate Detection settings.
